Data Evaluation: What it is, Key Methods, and Real-World Applications

Bad data is expensive. According to Gartner, poor data quality costs organizations an average of $12.9 million a year, driving misinformed decisions and compliance risks. Now that AI, financial forecasting, and business strategy all depend on clean data, evaluating data and getting it right is more critical than ever.

But what exactly is data evaluation? And how can businesses be sure their data is working for them—not against them? This article explores the key methods for assessing data quality and its real-world applications. Read on!

What is Data Evaluation?

Data evaluation is the process of assessing the accuracy, relevance, consistency, and usability of data to ensure reliable decision-making. It determines whether the information is structured, meaningful, and aligned with business objectives.

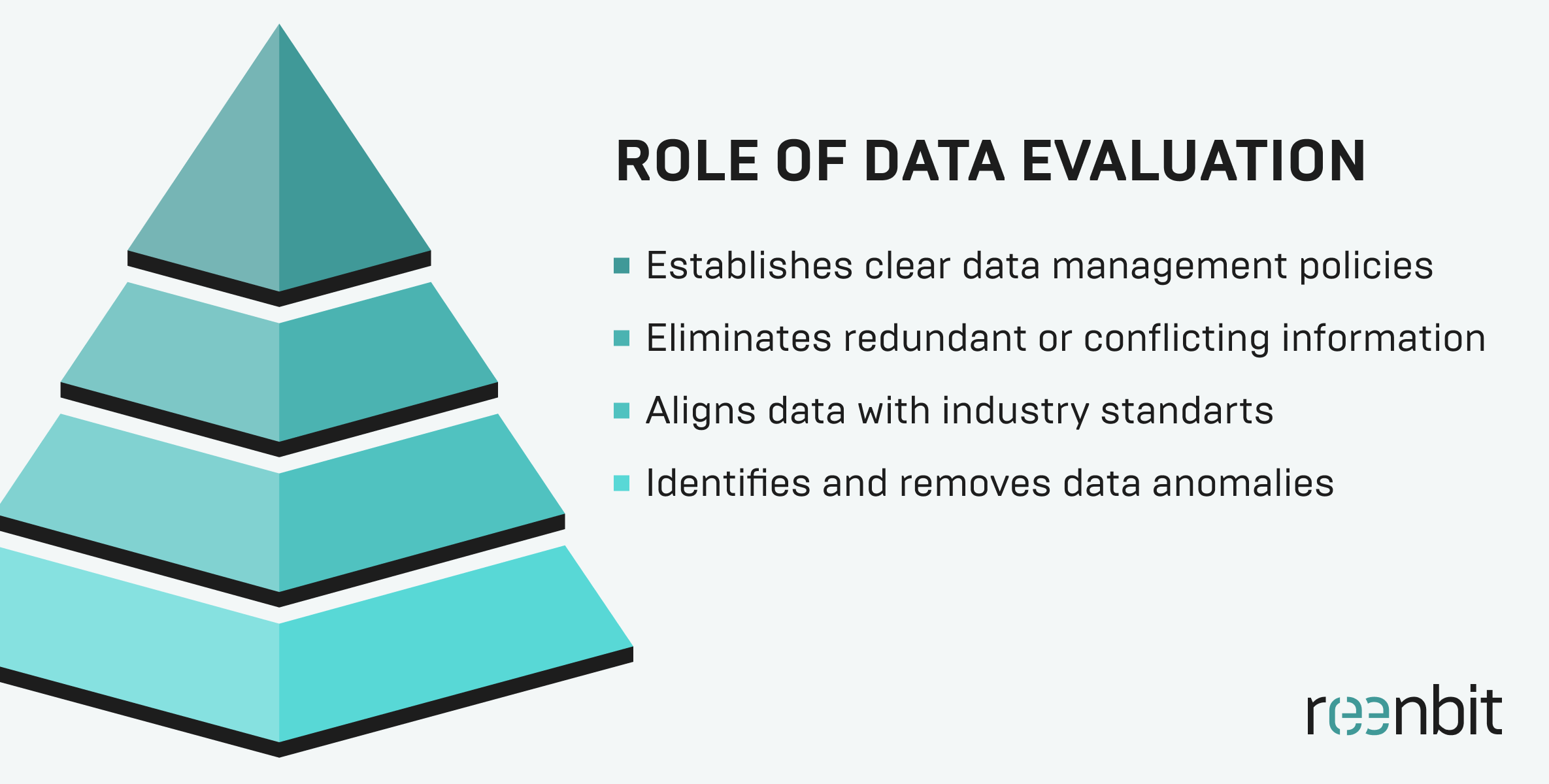

Role of Data Evaluation in Data Quality

High-quality data is essential for business intelligence, AI models, and regulatory compliance. Without evaluation, inaccurate, redundant, or outdated data can distort insights and lead to costly errors.

Key benefits of data evaluation in data quality:

- Prevents Data Corruption – Identifies and removes anomalies before they affect reports.

- Ensures Regulatory Compliance – Aligns data with GDPR, HIPAA, and other industry standards.

- Enhances Usability – Eliminates redundant or conflicting information.

- Improves Data Governance – Establishes clear data collection and management policies, following frameworks like DAMA International for best practices.

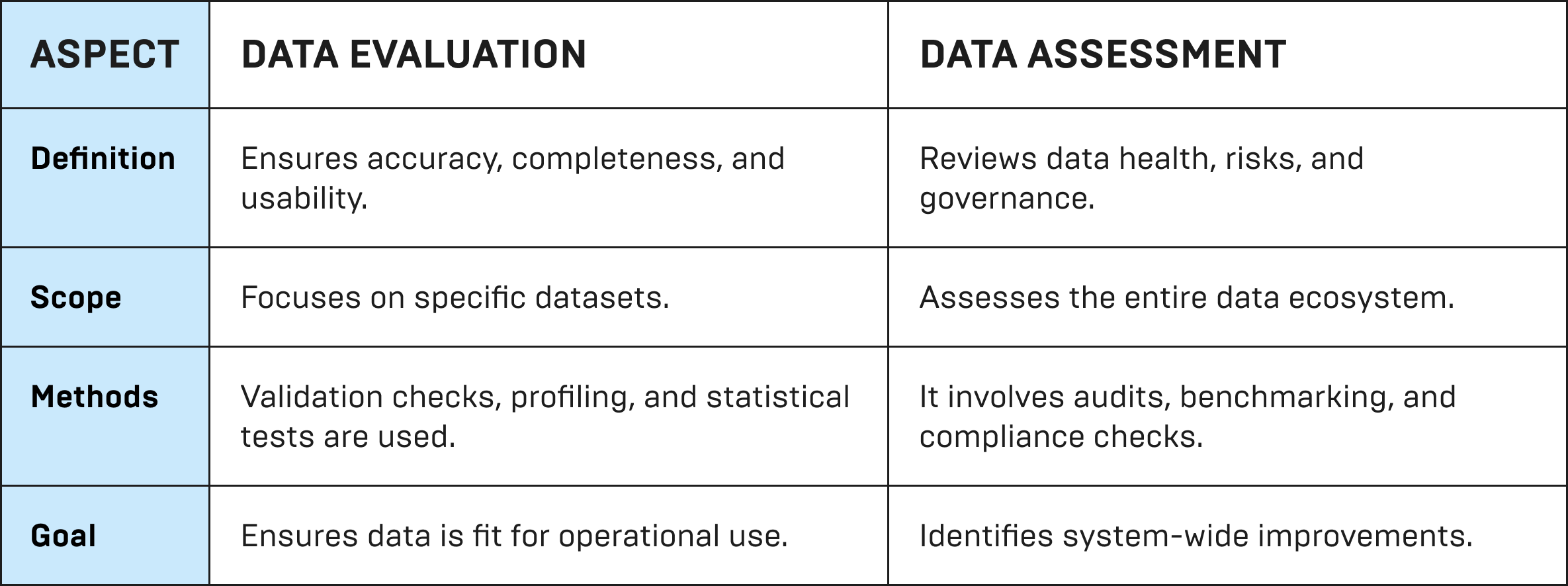

Difference Between Data Evaluation and Data Assessment

Though similar, these terms have distinct roles:

Key Takeaway: Data evaluation is a subset of data assessment, focusing on individual datasets rather than the overall governance framework.

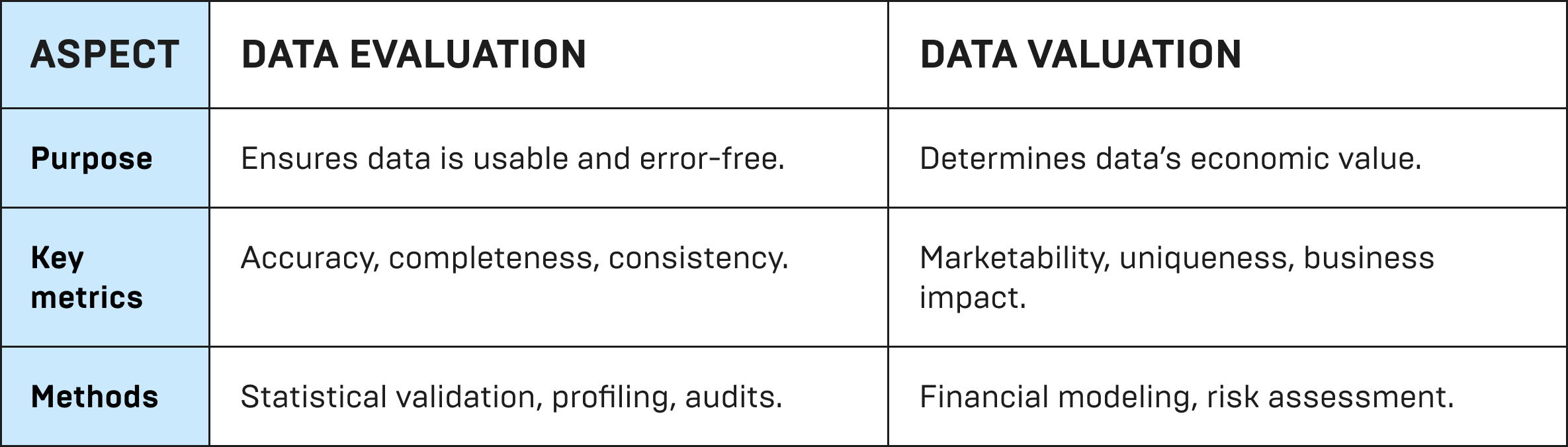

Data Evaluation vs. Data Valuation

Data valuation determines the financial worth of data, while evaluation ensures quality and usability.

Why is Data Evaluation Important?

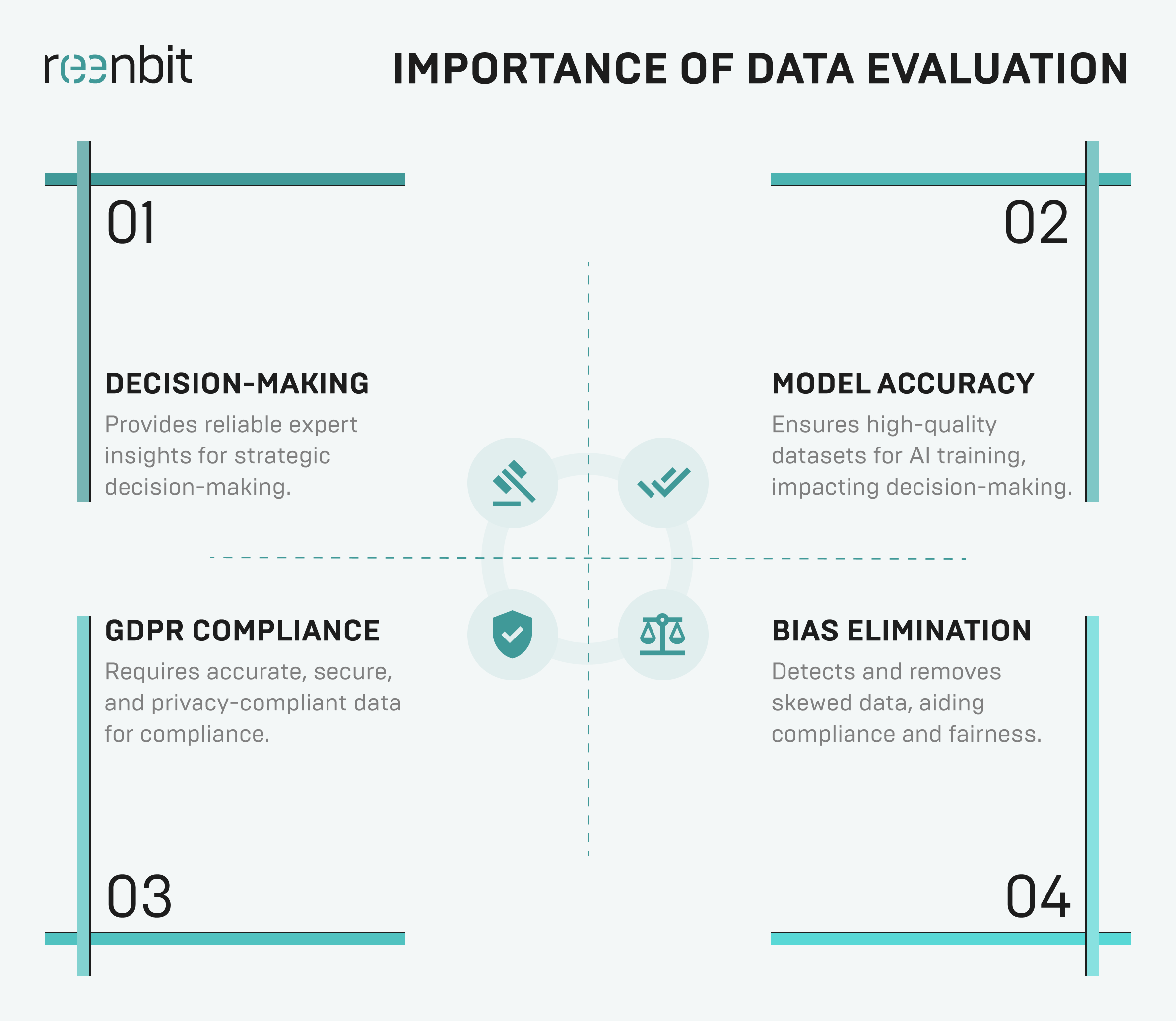

Here are some of the top reasons why data quality evaluation is essential.

Business Use Cases

These include:

- Decision-Making – Provides reliable insights for strategy.

- Customer Experience – Ensures accurate personalization.

- Fraud Detection – Identifies suspicious activity.

- Supply Chain Optimization – Prevents stockouts and inefficiencies.

- Healthcare & Research – Ensures accurate patient records and clinical data.

AI & Machine Learning Applications

These include:

- Bias Elimination – Detects and removes skewed data.

- Model Accuracy – Ensures high-quality datasets for AI training.

- Real-Time Decision-Making – Enables predictive analytics.

- Error Minimization – Reduces false positives/negatives.

Compliance & Regulations

Legal compliance and consumer protection rely on accurate, well-managed data. Organizations that work with inaccurate or poorly managed data without proper evaluation risk fines, reputational damage, and security breaches. To prevent this, businesses adhere to global standards such as those published by ISO, which establish best practices for data integrity, security, and compliance.

Key regulations and standards:

- GDPR – Requires accurate, secure, and privacy-compliant data.

- CCPA – Gives California consumers rights over their personal data and requires businesses to protect it.

- HIPAA – Ensures healthcare data integrity.

- FINRA & ISO 27001 – Set expectations for financial recordkeeping and information security management.

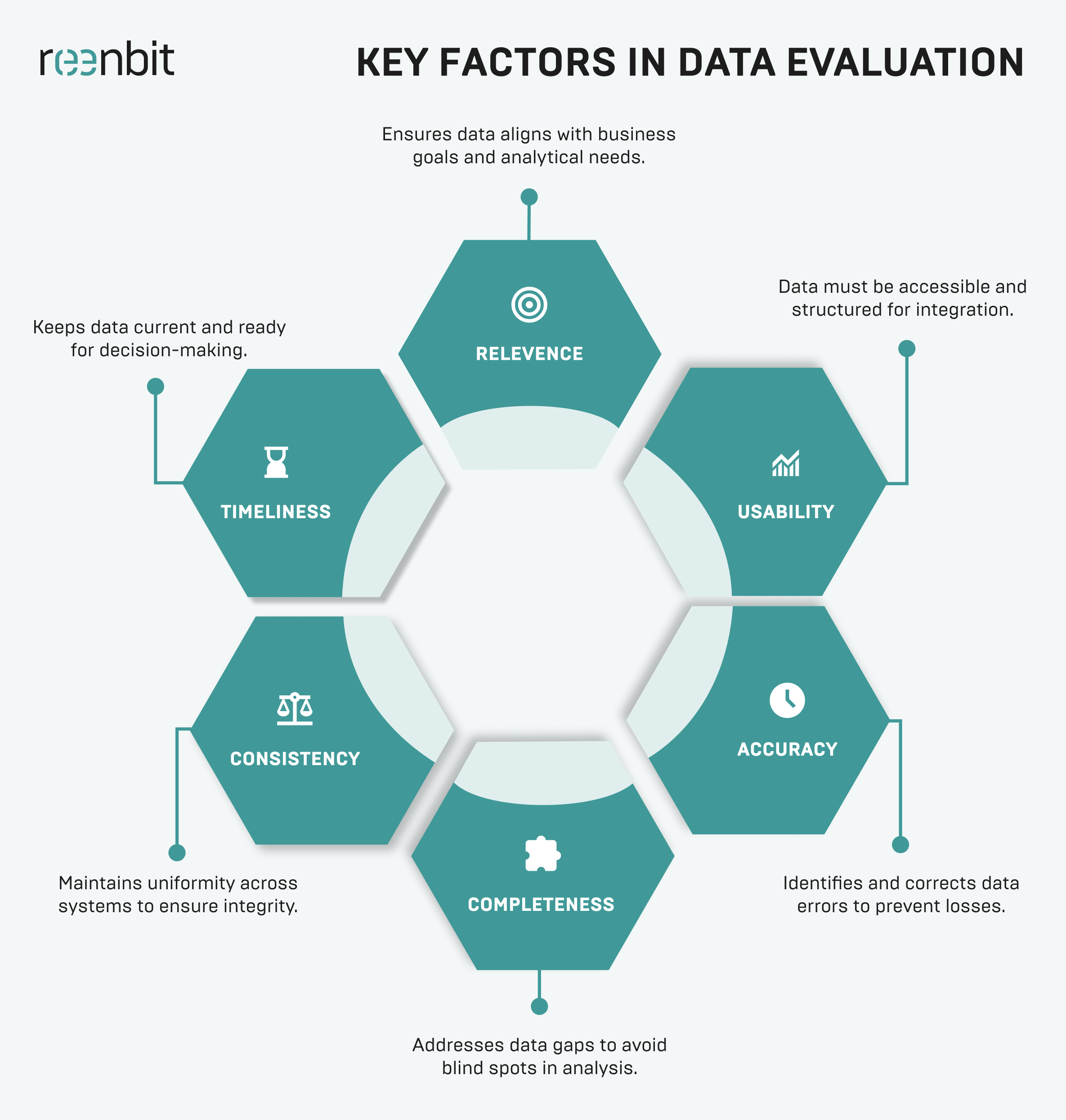

Key Factors in Data Evaluation

Businesses focus on evaluating data across the following six key factors to maintain reliability, consistency, and usability.

Relevance

Even accurate data is useless if it doesn’t serve a purpose. Organizations must align data with business goals and analytical needs, filtering out outdated or redundant records to avoid misguided decisions.

Usability

Data must be accessible, structured, and interoperable across systems. Structured formats like databases and APIs are far easier to integrate than PDFs or scanned documents. For example, a business collecting multilingual customer feedback must standardize translations to avoid extracting poor-quality insights.

Accuracy

Flawed data leads to poor decisions and financial losses. Businesses can use mathematical processes such as statistical modeling, trend analysis, and anomaly detection to identify errors before they impact decision-making.
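As a rough illustration of anomaly detection, the sketch below flags values that sit far from the median, using the modified z-score (based on the median absolute deviation). The function name `flag_anomalies`, the 3.5 threshold, and the sample order counts are assumptions chosen for the example, not a prescribed method.

```python
from statistics import median

def flag_anomalies(values, threshold=3.5):
    """Return values whose modified z-score exceeds the threshold.

    The modified z-score uses the median and the median absolute
    deviation (MAD), so one extreme value does not hide itself by
    inflating the spread.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]

# Hypothetical daily order counts with one suspicious entry
daily_orders = [102, 98, 105, 97, 101, 990, 99, 103]
print(flag_anomalies(daily_orders))  # [990]
```

A flagged value is a candidate for review, not proof of an error. A real spike in orders would look the same to this check.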

Completeness

Missing data creates blind spots in an analysis process. Businesses must decide whether to fill gaps with statistical models, retrieve missing information, or discard incomplete records. In healthcare, incomplete patient histories can result in misdiagnoses.
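Before deciding whether to fill, retrieve, or discard, it helps to measure how much is actually missing. The sketch below computes a per-field completeness ratio. The field names and patient records are made up for illustration, and treating `None` and empty strings as "missing" is an assumption.

```python
def completeness(records, fields):
    """Share of records that have a non-empty value for each field."""
    total = len(records)
    if total == 0:
        return {f: 0.0 for f in fields}
    return {
        f: sum(1 for r in records if r.get(f) not in (None, "")) / total
        for f in fields
    }

# Hypothetical patient records with gaps
patients = [
    {"id": 1, "dob": "1980-02-11", "allergies": "penicillin"},
    {"id": 2, "dob": "", "allergies": None},
    {"id": 3, "dob": "1975-09-30", "allergies": ""},
    {"id": 4, "dob": "1990-01-05"},
]
report = completeness(patients, ["id", "dob", "allergies"])
print(report)  # {'id': 1.0, 'dob': 0.75, 'allergies': 0.25}
```

A field at 25% completeness, like `allergies` here, is usually a signal to go back to the source rather than to impute values.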

Consistency

Data must remain uniform across systems to prevent reporting errors and miscommunication. If a customer’s details differ across databases, it leads to duplicate records and operational confusion. Standardizing formats, naming conventions, and measurement units ensures data integrity.
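One way to check consistency is to normalize the same customer's record from two systems and compare field by field. The sketch below assumes two hypothetical sources, `crm` and `billing`, keyed by customer ID. The normalization rules (lowercased email, digits-only phone) are illustrative choices.

```python
def normalize(record):
    """Bring email and phone into one comparable format."""
    return {
        "email": record["email"].strip().lower(),
        "phone": "".join(ch for ch in record["phone"] if ch.isdigit()),
    }

def find_mismatches(crm, billing):
    """Map each shared customer ID to the fields that still differ
    after normalization."""
    mismatches = {}
    for cid in crm.keys() & billing.keys():
        a, b = normalize(crm[cid]), normalize(billing[cid])
        diff = sorted(k for k in a if a[k] != b[k])
        if diff:
            mismatches[cid] = diff
    return mismatches

# Hypothetical records for the same customers in two systems
crm = {
    "C1": {"email": "Ana@Example.com ", "phone": "+41 44 123 45 67"},
    "C2": {"email": "li@example.com", "phone": "044-555-0101"},
}
billing = {
    "C1": {"email": "ana@example.com", "phone": "+41441234567"},
    "C2": {"email": "li@example.org", "phone": "0445550101"},
}
print(find_mismatches(crm, billing))  # {'C2': ['email']}
```

Customer C1 differs only in formatting, so it passes once normalized. C2 has a real conflict that someone needs to resolve.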

Timeliness

Real-time data is essential in fraud detection, cybersecurity, and logistics, where outdated information can lead to costly mistakes. Businesses must balance speed and accuracy to keep data relevant without overwhelming systems.
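A simple timeliness check compares each record's last update against a maximum allowed age. In the sketch below, the `updated_at` field, the 15-minute limit, and the transaction IDs are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

def stale_records(records, max_age, now=None):
    """Return the IDs of records last updated longer ago than max_age."""
    now = now or datetime.now(timezone.utc)
    return [r["id"] for r in records if now - r["updated_at"] > max_age]

# Fixed reference time so the example is reproducible
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
feed = [
    {"id": "txn-1", "updated_at": now - timedelta(minutes=2)},
    {"id": "txn-2", "updated_at": now - timedelta(hours=3)},
]
print(stale_records(feed, timedelta(minutes=15), now=now))  # ['txn-2']
```

The right `max_age` depends on the use case: minutes for fraud monitoring, possibly days for a monthly report.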

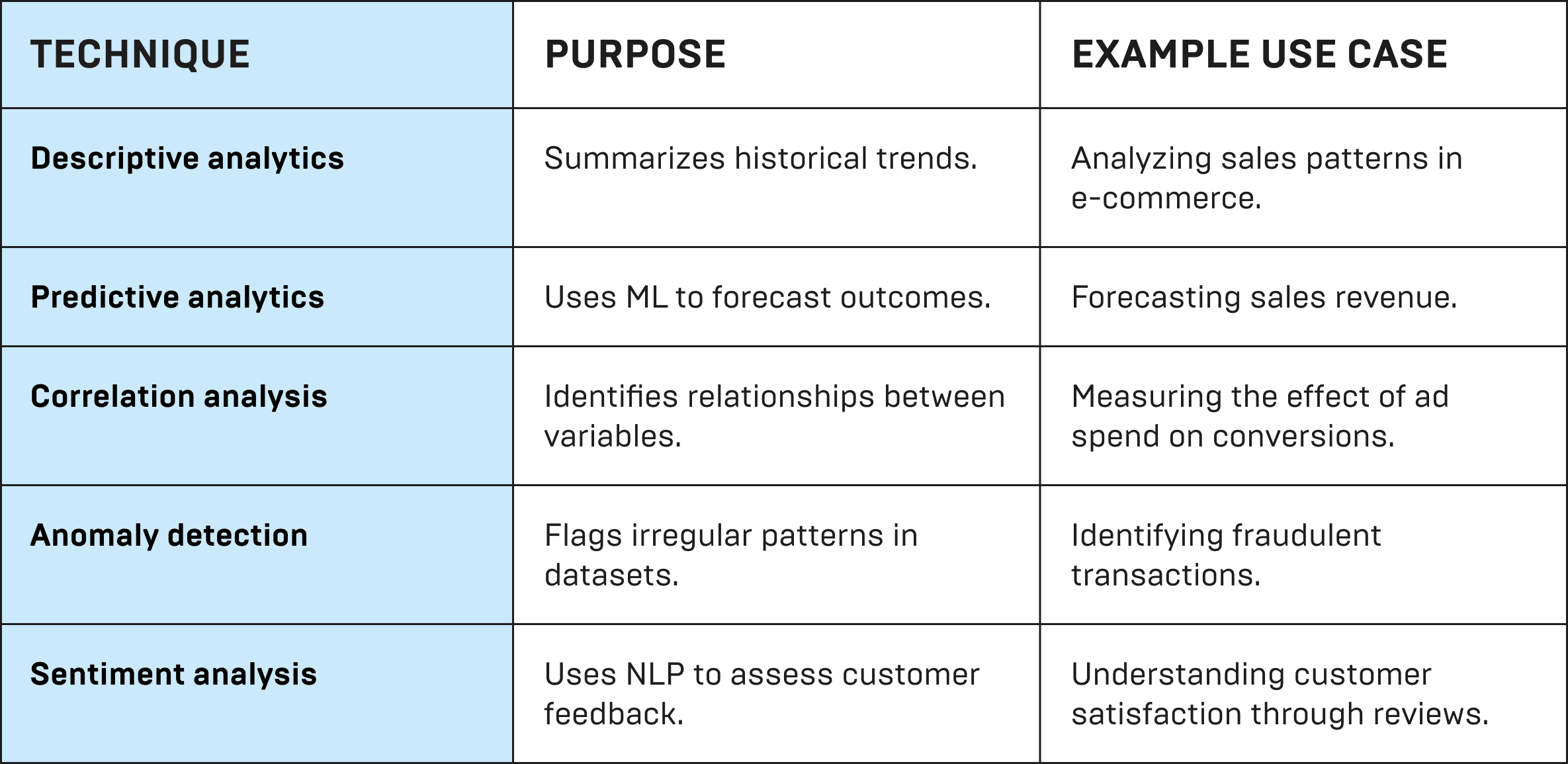

Data Evaluation Methods

With knowledge of how to evaluate data, businesses can ensure accuracy, relevance, and compliance. Here is a comparison of the commonly used analysis techniques.

Quantitative vs. Qualitative Analysis

- Quantitative evaluation relies on numbers, statistical models, and anomaly detection for objective analysis. It’s ideal for financial reporting, machine learning, and risk assessment, such as tracking sales revenue trends over time.

- Qualitative evaluation uses expert judgment, sentiment analysis, and relevance checks to assess data in context. Unlike quantitative methods, it interprets abstract concepts like customer sentiment, brand perception, and cultural trends. It relies on expert insights rather than purely statistical validation.

Manual vs. Automated Data Evaluation

- Manual evaluation means people check the data themselves. It works well for small sets of data, audits, or when expert judgment is needed. But it takes a lot of time and can lead to mistakes—like when someone has to read through every survey answer.

- Automated evaluation uses AI and predefined rules to check large amounts of data quickly. It’s good for real-time tracking and spotting fraud. It’s generally faster and more consistent than manual review, but people are still needed to check unusual results and make sure the system works correctly.

Data Profiling & Auditing

- Data profiling detects errors, inconsistencies, and patterns through format checks, column profiling, and pattern analysis—for example, it can identify inconsistent ZIP codes in databases.

- Data auditing ensures compliance and security using both data engineering and evaluation. It helps organizations meet GDPR, HIPAA, and financial regulations, such as verifying patient record privacy in healthcare.
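To make the ZIP code example concrete, here is a minimal column-profiling sketch. It assumes US-style ZIP codes (five digits, optionally followed by a four-digit extension), and the function names and sample values are hypothetical.

```python
import re
from collections import Counter

# Assumption: US ZIP or ZIP+4 format
ZIP_PATTERN = re.compile(r"\d{5}(-\d{4})?")

def shape(value):
    """Reduce a value to its pattern: digits become 9, letters become A."""
    return re.sub(r"[A-Za-z]", "A", re.sub(r"\d", "9", value))

def profile_zip_column(values):
    """Basic column profile: counts, pattern frequencies, invalid values."""
    return {
        "count": len(values),
        "distinct": len(set(values)),
        "patterns": Counter(shape(v) for v in values),
        "invalid": [v for v in values if not ZIP_PATTERN.fullmatch(v)],
    }

zips = ["10001", "94105-1234", "1001", "9410S", "10001"]
profile = profile_zip_column(zips)
print(profile["patterns"].most_common())
print(profile["invalid"])  # ['1001', '9410S']
```

The pattern counts show at a glance which formats dominate the column, while the `invalid` list points to the specific rows that need fixing.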

| Challenge | Solution |
| --- | --- |
| High Volume – Data is too vast for manual checks. | AI-powered validation & cloud analytics automate processing. |
| Data Variety – Structured and unstructured formats complicate integration. | Standardization techniques, AI categorization, and data engineering tools improve data transformation. |
| Real-time Processing – Instant monitoring is required. | Machine learning enables continuous anomaly detection. |
| Security & Compliance – Regulatory standards demand data integrity. | Automated compliance checks and encryption enhance security. |

How to Analyze and Evaluate Data

A structured approach to data analysis and evaluation ensures accuracy, relevance, and reliability in decision-making. The process involves defining objectives, collecting reliable data, refining datasets, applying analytical techniques, and validating findings.

Step 1: Define the Purpose

Clearly defining the objective prevents irrelevant insights and wasted effort. Organizations should determine the following:

- Business Goals: What decision will this data support?

- Key Metrics: What KPIs or benchmarks apply?

- Target Users: Who will use these insights (executives, analysts, customers)?

- Regulatory Compliance: Are there legal requirements to follow?

Step 2: Identify & Collect Data

Data must come from reliable, relevant sources to ensure accuracy and completeness.

| Source type | Example |
| --- | --- |
| Internal | CRM systems, ERP, and financial reports. |
| External | Government databases, industry reports, and APIs. |
| User-generated | Customer reviews, multiple-choice surveys, behavioral data. |
| Machine data | IoT sensors, AI logs, and website interactions. |
| Governmental | Public sector data from sources like Data.gov. |

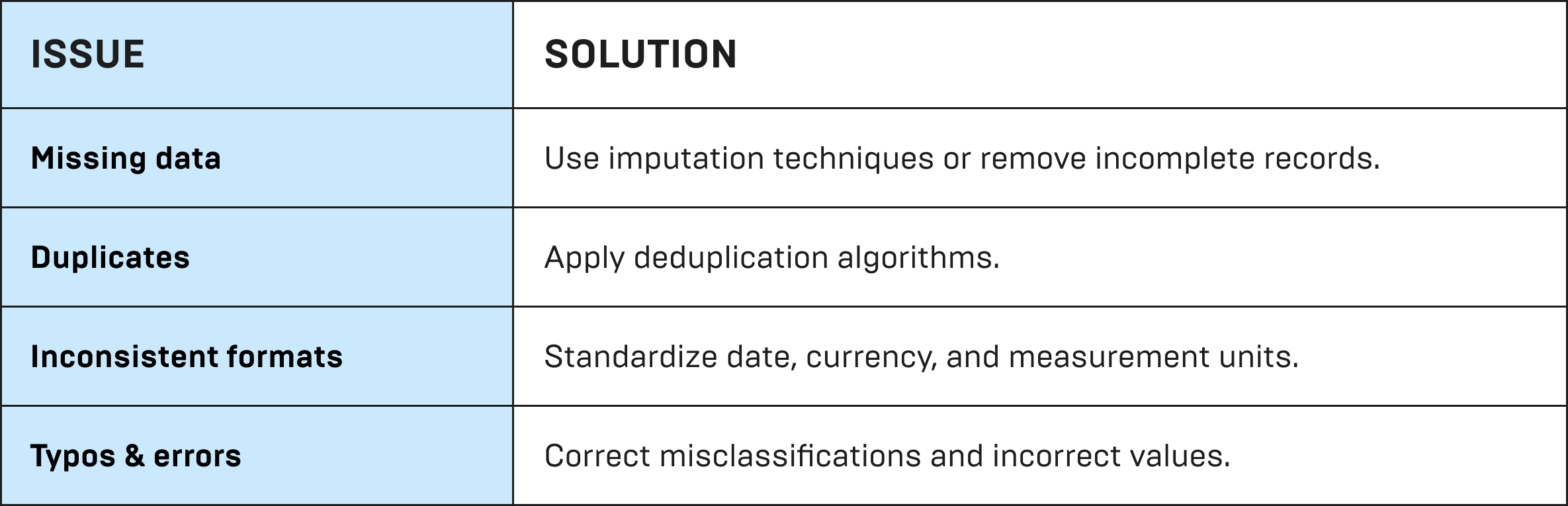

Step 3: Clean & Transform Data

Raw data often contains errors, inconsistencies, and missing values that must be addressed before analysis.
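A minimal cleaning pass might trim whitespace, standardize casing, drop rows missing a key field, and remove duplicates. The sketch below assumes `email` is the key field and uses made-up rows. Real pipelines typically need more rules than this.

```python
def clean(rows):
    """Normalize fields, drop rows without an email, remove duplicates."""
    seen, cleaned = set(), []
    for row in rows:
        email = (row.get("email") or "").strip().lower()
        if not email:
            continue  # discard rows missing the key field
        if email in seen:
            continue  # drop duplicates of an already seen email
        seen.add(email)
        cleaned.append({
            "email": email,
            "country": (row.get("country") or "unknown").strip().upper(),
        })
    return cleaned

# Hypothetical raw rows with typical problems
raw = [
    {"email": " Ana@Example.com", "country": "ch"},
    {"email": "ana@example.com", "country": "CH"},
    {"email": None, "country": "de"},
    {"email": "li@example.com"},
]
cleaned_rows = clean(raw)
print(cleaned_rows)
```

Here four raw rows become two clean ones: the duplicate and the row without an email are dropped, and the missing country is marked explicitly instead of left blank.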

Step 4: Apply Analytical Techniques

Once the data is cleaned, apply the appropriate statistical or machine learning methods to uncover insights.
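As one example of a statistical technique, the sketch below fits a least-squares trend line to a series of values. The function name `linear_trend` and the revenue figures are hypothetical, and a straight line is only one of many possible models.

```python
def linear_trend(values):
    """Least-squares slope and intercept of values against their index."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean

# Hypothetical monthly revenue figures
monthly_revenue = [100, 110, 120, 130, 140]
slope, intercept = linear_trend(monthly_revenue)
print(slope, intercept)  # 10.0 100.0
```

A slope of 10 means revenue grew by about 10 units per month over this period.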

Step 5: Interpret & Validate Findings

Before making decisions, insights must be validated and translated into actionable recommendations:

- Cross-Validation: Compare findings with historical data or industry benchmarks.

- Peer Review: Have multiple analysts verify interpretations.

- Real-World Testing: Apply insights in a pilot phase before full implementation.

- Error Margins & Confidence Intervals: Ensure statistical reliability.
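To illustrate the last point, the sketch below computes a confidence interval for a sample mean using the normal approximation. The function name and the sample ratings are assumptions. For small samples, a t-distribution would give a slightly wider and more appropriate interval.

```python
from statistics import NormalDist, mean, stdev

def mean_confidence_interval(sample, confidence=0.95):
    """Normal-approximation confidence interval for the sample mean."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    m = mean(sample)
    margin = z * stdev(sample) / len(sample) ** 0.5
    return m - margin, m + margin

# Hypothetical customer satisfaction ratings
sample = [4.1, 3.9, 4.3, 4.0, 4.2, 3.8, 4.4, 4.0]
low, high = mean_confidence_interval(sample)
print(f"mean={mean(sample):.2f}, 95% CI=({low:.2f}, {high:.2f})")
```

If the interval is too wide to support the decision at hand, that is a reason to collect more data before acting.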

Tools for Assessing Data Accuracy & Relevance

Want to know how to evaluate data quality effectively? Below are key categories of evaluation software solutions that enhance data quality.

Data validation tools

These help catch duplicates, missing values, or formatting issues. Popular options include Talend, Great Expectations, and Apache Griffin.
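The core idea behind these tools can be sketched in plain Python as a set of named rules applied to each record. This is not the API of Talend, Great Expectations, or Apache Griffin. The rule set, field names, and `ORD-` prefix are hypothetical.

```python
import re

# Hypothetical rules: each field maps to a check returning True or False
RULES = {
    "order_id": lambda v: isinstance(v, str) and v.startswith("ORD-"),
    "amount": lambda v: isinstance(v, (int, float)) and v >= 0,
    "email": lambda v: isinstance(v, str)
    and re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", v) is not None,
}

def validate(record):
    """Return the names of fields that are missing or fail their rule."""
    return [
        field
        for field, rule in RULES.items()
        if field not in record or not rule(record[field])
    ]

print(validate({"order_id": "ORD-1", "amount": 19.9, "email": "a@b.co"}))  # []
print(validate({"order_id": "17", "amount": -5, "email": "a@b.co"}))  # ['order_id', 'amount']
print(validate({"order_id": "ORD-2", "amount": 10}))  # ['email']
```

Dedicated tools add what this sketch lacks: scheduling, reporting, and connectors to databases and pipelines.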

AI-powered tools

AI can automate data cleaning and spot errors you might miss. Tools like Trifacta, Informatica, and Google Cloud Dataprep are commonly used.

Compliance and governance tools

To follow rules like GDPR and HIPAA, businesses use platforms like Collibra and OneTrust.

Reporting and dashboard tools

To make the data easy to understand, tools like Tableau, Power BI, and Looker Studio (formerly Google Data Studio) turn raw numbers into visuals.

Data Management in Monitoring and Evaluation (M&E)

Good monitoring and evaluation begins with strong data management. This means collecting, storing, checking, and using data properly to support smart decisions. Let’s explore these components.

Accurate data collection program

A reliable data evaluation process starts with easy-to-use digital forms and automated entry to reduce errors and keep records clear.

Safe and organized storage

Cloud tools like Google Cloud, AWS, or Azure keep data secure and backed up. Data warehouses store structured info, while data lakes handle unstructured data.

Checking data quality

Built-in checks can spot missing or duplicate data. AI tools can even catch unusual patterns or errors.

Connecting systems

APIs and ETL processes help move and clean data. Formats like JSON, XML, and CSV make it easier for systems to work together.
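A tiny transform step of this kind can be sketched with the standard library: read a JSON payload, keep a fixed set of fields, and write CSV. The function name, field list, and payload below are assumptions for illustration.

```python
import csv
import io
import json

def json_to_csv(json_text, fields):
    """Convert a JSON array of objects into CSV text with fixed columns."""
    rows = json.loads(json_text)
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fields, lineterminator="\n")
    writer.writeheader()
    for row in rows:
        # Keep only the requested fields; fill gaps with an empty string
        writer.writerow({f: row.get(f, "") for f in fields})
    return buffer.getvalue()

# Hypothetical payload from an inventory API
payload = '[{"sku": "A1", "qty": 3, "note": "x"}, {"sku": "B2"}]'
csv_text = json_to_csv(payload, ["sku", "qty"])
print(csv_text)
```

The extra `note` field is dropped and the missing `qty` becomes an empty cell, so the downstream system always receives the same columns.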

Keeping data safe

Strong encryption and access controls protect sensitive data. Following rules like GDPR and HIPAA helps keep things secure and legal.

Turning data into insights

Tools like Tableau and Power BI turn data into clear dashboards and reports. Custom KPIs help track progress and support smart decisions.

Case Study: Improving Data Evaluation with Automated Reporting in the Retail Industry

A strong data evaluation example can be seen in the retail industry, where businesses analyze sales data to improve inventory management and pricing strategies. For example, a Swiss fashion retailer had sales data in both Shopify and PrestaShop. They needed to bring this data together in one place and make sure it was accurate and reliable. Through an automated data pipeline with custom validation reports, they:

- Detected and corrected inconsistencies in sales data before generating reports.

- Ensured real-time financial and operational data accuracy, reducing errors in decision-making.

- Reduced manual data processing by 50% through automated ingestion and structured reporting.

- Increased efficiency with self-service analytics, minimizing reliance on IT teams for data validation.

This example highlights how data evaluation processes—such as anomaly detection, validation checks, and structured reporting—enhance accuracy and usability in retail analytics.

Conclusion

Data evaluation is the backbone of smart business decisions. Without it, companies risk costly mistakes, compliance issues, and unreliable insights. However, those who ensure accuracy and consistency make better decisions and stay ahead.

Contact Reenbit today and discover how our data engineering solutions can help you make smarter, data-driven decisions.

FAQ

What is the difference between Data Evaluation and Data Quality Assessment?

Data evaluation checks if data is useful, relevant, and valuable for decision-making. Data quality assessment, on the other hand, looks at how complete, accurate, and consistent the data is. In short: quality assessment checks if the data meets standards, while evaluation checks if it’s actually helpful.

Why is Data Evaluation critical for business analytics?

Data evaluation ensures business decisions are based on reliable insights, preventing costly mistakes. Accurate, relevant data improves forecasting, refines analytical models, and enhances operational efficiency.

How can companies improve their Data Evaluation processes?

Companies can enhance data evaluation using automated validation tools, AI-driven analytics, and anomaly detection. Standardizing data collection, conducting regular audits, and training employees in data governance also improve accuracy and reliability.