What Is a Data Pipeline?

In today’s business world, making smarter decisions quickly is essential to maintaining a competitive edge. However, obtaining timely insights from a company’s data can seem overwhelming. As the volume of data and the number of sources grow daily, spanning on-premise systems, SaaS applications, databases, and external sources, it can be challenging to bring everything together. One solution for gathering data is the data pipeline.

So what is a data pipeline? A data pipeline is a series of actions that transfers data from a source to a destination. Along the way, the data may be filtered, cleaned, aggregated, enriched, and even analyzed in real time. Pipelines unify data from various sources and formats, making it suitable for analytics and business intelligence. They also give team members access to the data they need without requiring access to sensitive production systems.
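To make the idea concrete, here is a minimal sketch of a pipeline in plain Python. The record layout, the `clean` and `run_pipeline` names, and the sample orders are all hypothetical; the point is only to show the clean, filter, and aggregate steps chained together.

```python
from collections import defaultdict

# Hypothetical raw records, as they might arrive from two different sources.
RAW_ORDERS = [
    {"customer": " Alice ", "amount": "120.50", "source": "web"},
    {"customer": "bob", "amount": "80", "source": "pos"},
    {"customer": "ALICE", "amount": "30.25", "source": "pos"},
    {"customer": "carol", "amount": "not-a-number", "source": "web"},
]


def clean(record):
    """Normalize the customer name and parse the amount; return None if invalid."""
    try:
        amount = float(record["amount"])
    except ValueError:
        return None
    return {"customer": record["customer"].strip().lower(), "amount": amount}


def run_pipeline(records):
    """Clean each record, drop invalid ones, and aggregate totals per customer."""
    totals = defaultdict(float)
    for record in records:
        cleaned = clean(record)
        if cleaned is None:  # filter step: skip rows that failed cleaning
            continue
        totals[cleaned["customer"]] += cleaned["amount"]
    return dict(totals)


totals = run_pipeline(RAW_ORDERS)
print(totals)
```

The two spellings of "Alice" end up under one key, and the row with an unparseable amount is dropped rather than stopping the run.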

A Data Pipeline’s Destination

The destination of a data pipeline can vary, depending on the specific use case. Common destinations include data warehouses, databases, and analytical tools such as Tableau or Power BI. A data pipeline may also be used to populate a marketing platform or user interface.

Tools To Build a Data Pipeline

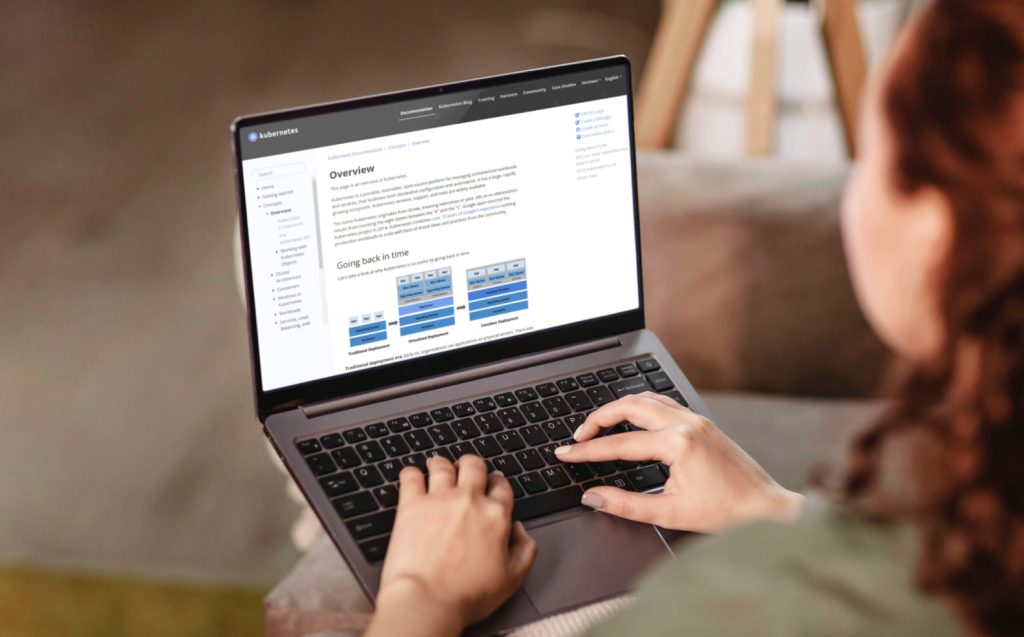

Several tools are available for building data pipelines, ranging from open-source to commercial products. Some popular open-source tools include Apache NiFi and Apache Kafka. Commercial options include Talend, Google Cloud Dataflow, and Azure Data Factory. The tool you choose will depend on the specific needs of your data pipeline, including the type of data you are working with and the architecture of your pipeline.

Data Pipeline Types and Use Cases

There are two main types of data pipelines: batch processing and streaming.

BATCH PROCESSING PIPELINES

Batch processing pipelines are ideal for handling large data sets that do not require immediate attention. They are often used for tasks such as data integration, where data from various sources is consolidated into a single data warehouse. This type of pipeline is also commonly used for business intelligence purposes, where historical data is analyzed to gain insights into past performance.

The main advantage of batch processing pipelines is that they handle large amounts of data efficiently, which makes them a popular choice for data warehousing and business intelligence applications. They also provide a high degree of control over how data is processed, allowing complex transformations and manipulations to be performed.
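As an illustration, the sketch below loads a small export into a table in fixed-size batches. It assumes an in-memory SQLite database as a stand-in for a data warehouse; the `CSV_EXPORT` data, the `orders` table, and the function names are hypothetical.

```python
import csv
import io
import sqlite3
from itertools import islice

# Hypothetical nightly export; in practice this would be a file or an extract.
CSV_EXPORT = """order_id,region,amount
1,eu,100.0
2,us,250.0
3,eu,50.0
4,us,25.0
5,eu,10.0
"""


def batches(rows, size):
    """Yield lists of up to `size` rows until the iterator is exhausted."""
    iterator = iter(rows)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def load_in_batches(csv_text, connection, batch_size=2):
    """Load the export into a warehouse-like table, one transaction per batch."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER, region TEXT, amount REAL)"
    )
    reader = csv.DictReader(io.StringIO(csv_text))
    batch_count = 0
    for chunk in batches(reader, batch_size):
        with connection:  # commits the batch, or rolls it back on error
            connection.executemany(
                "INSERT INTO orders VALUES (:order_id, :region, :amount)", chunk
            )
        batch_count += 1
    return batch_count


warehouse = sqlite3.connect(":memory:")
batch_count = load_in_batches(CSV_EXPORT, warehouse)
revenue_by_region = dict(
    warehouse.execute("SELECT region, SUM(amount) FROM orders GROUP BY region")
)
```

Committing per batch keeps each transaction small, and a failed batch can be retried without reloading the rows that were already committed.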

STREAMING PIPELINES

Streaming pipelines, on the other hand, are designed to handle data as it arrives. They are commonly used in applications that require real-time processing, such as fraud detection or event-driven applications. For example, a streaming pipeline can flag fraudulent transactions as they occur, allowing organizations to respond quickly and make sound decisions. This type of pipeline also provides a high degree of flexibility, since it can process data from various sources, such as databases, messaging systems, and sensors.
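The following sketch shows the event-at-a-time style of a streaming pipeline. The rule it applies (too many transactions from one card inside a sliding window) is a deliberately simple, hypothetical stand-in for real fraud detection, and the in-memory list stands in for a message queue.

```python
from collections import defaultdict, deque

# Hypothetical rule: flag a card that makes more than MAX_EVENTS transactions
# within WINDOW_SECONDS. Real fraud detection is far more involved.
WINDOW_SECONDS = 60
MAX_EVENTS = 3


def detect_bursts(events):
    """Consume (timestamp, card_id) events one at a time and yield suspicious ones."""
    recent = defaultdict(deque)  # card_id -> timestamps inside the window
    for timestamp, card_id in events:
        window = recent[card_id]
        window.append(timestamp)
        # Evict timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > WINDOW_SECONDS:
            window.popleft()
        if len(window) > MAX_EVENTS:
            yield (timestamp, card_id)


# A small in-memory stand-in for a message queue or event stream.
stream = [
    (0, "card-a"), (5, "card-b"), (10, "card-a"), (20, "card-a"),
    (30, "card-a"), (90, "card-b"), (200, "card-a"),
]
alerts = list(detect_bursts(stream))
```

Because `detect_bursts` is a generator, each alert is produced as soon as the triggering event is seen, without waiting for the rest of the stream.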

Data Pipeline Architecture

- Data Collection is the first step in building a data pipeline and involves gathering data from various disparate sources, such as internal systems, external sources, CSV files, and more.

- Data Transformation is the next step in the data pipeline process, where the data is transformed into a common format for easier use and analysis. This may include cleaning, normalizing, and enriching the data.

- Data Loading is the step where the transformed data is loaded into a destination such as a data warehouse, database, or analytical tool.

- Data Management is the final step in the data pipeline architecture and involves monitoring and maintaining the pipeline to ensure that data flows correctly and any issues are promptly addressed.
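The four steps above can be sketched as four small functions. This is only an illustration under stated assumptions: the CRM and billing inputs are invented, a Python list stands in for the destination, and "management" is reduced to logging each stage.

```python
import csv
import io
import json
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("pipeline")

# Hypothetical inputs standing in for two disparate sources.
CRM_CSV = "email,plan\nANA@EXAMPLE.COM,pro\nben@example.com,free\n"
BILLING_JSON = '[{"email": "ana@example.com", "paid": 49.0}]'


def collect():
    """Data collection: read raw rows from each source."""
    crm_rows = list(csv.DictReader(io.StringIO(CRM_CSV)))
    billing_rows = json.loads(BILLING_JSON)
    return crm_rows, billing_rows


def transform(crm_rows, billing_rows):
    """Data transformation: normalize emails and enrich CRM rows with billing data."""
    paid_by_email = {row["email"].lower(): row["paid"] for row in billing_rows}
    return [
        {
            "email": row["email"].lower(),
            "plan": row["plan"],
            "paid": paid_by_email.get(row["email"].lower(), 0.0),
        }
        for row in crm_rows
    ]


def load(rows, destination):
    """Data loading: append to the destination (a list standing in for a warehouse)."""
    destination.extend(rows)
    return len(rows)


def run(destination):
    """Data management: log each stage so row counts and failures are visible."""
    try:
        crm_rows, billing_rows = collect()
        log.info("collected %d CRM rows, %d billing rows", len(crm_rows), len(billing_rows))
        rows = transform(crm_rows, billing_rows)
        loaded = load(rows, destination)
        log.info("loaded %d rows", loaded)
        return loaded
    except Exception:
        log.exception("pipeline run failed")
        raise


warehouse_table = []
loaded_count = run(warehouse_table)
```

Keeping each stage in its own function makes it easier to test a stage in isolation and to swap one source or destination for another.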

Functional Data Pipeline Benefits

There are several benefits to having a functional data pipeline, including the following:

BUSINESS AUTONOMY

With a data pipeline in place, businesses have more control over their data and can make decisions based on real-time data insights. This gives businesses greater autonomy, enabling them to make informed decisions without relying on external data providers.

BUSINESS ANALYSIS & BI

Data pipelines make it easier for businesses to access and analyze large amounts of data, providing valuable insights that can be used to make better business decisions. With a data pipeline, companies can easily integrate data from disparate sources and perform complex data analysis without requiring manual data entry or error-prone data transfers.

PRODUCTIVITY

Data pipelines automate moving data from one place to another, freeing up time and resources that may be better spent on other tasks. With a data pipeline, data is automatically collected, transformed, and loaded into the destination, reducing the need for manual intervention and increasing overall productivity.

DATA SECURITY

Data pipelines help to ensure the security and privacy of sensitive data. By automating data transfer, data pipelines reduce the risk of data breaches caused by human error or malicious intent. Additionally, data pipelines can be configured to encrypt data in transit, ensuring that sensitive data remains secure.

COMPLIANCE

Data pipelines can also help organizations comply with industry regulations and standards, such as GDPR or HIPAA. By automating data transfer, data pipelines reduce the risk of non-compliance caused by manual data transfers or human error.

DATA MANAGEMENT

Data pipelines simplify the process of managing large amounts of data, making it easier to store and access data in a centralized location. With a data pipeline, data is stored in a standard format, making it easier to search, query, and manipulate data as needed. This helps organizations keep their data organized and accessible, reducing the risk of data loss or corruption.

How to Build an Efficient Data Pipeline

Building an efficient data pipeline requires careful planning and consideration of several vital components, including:

- Data Sources: The very first step in building a data pipeline is identifying the data sources. This can include internal databases, CSV files, external APIs, or data from marketing platforms.

- Data Destination: The next step is to determine where the data will be stored. This can be a cloud platform, such as Azure, Amazon Web Services or Google Cloud Platform, or a local database. The data destination should be chosen based on the organization’s needs, including factors such as cost, scalability, and data security.

- Pipeline Tools: Once the data sources and destination have been determined, the next step is to choose the tools used to build the pipeline. Various pipeline tools are available, including ETL tools, data engineering platforms, and cloud-based data pipeline services. The choice of tool will depend on the organization’s needs and the pipeline’s complexity.
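One lightweight way to capture these three decisions before any implementation starts is a small, validated specification. The sketch below is an assumption about how that might look; `Source`, `PipelineSpec`, and all the example values are hypothetical names, not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    kind: str  # e.g. "database", "csv", "api"


@dataclass(frozen=True)
class PipelineSpec:
    sources: tuple
    destination: str
    tool: str

    def validate(self):
        """Return a list of human-readable problems; empty means the spec is usable."""
        problems = []
        if not self.sources:
            problems.append("at least one data source is required")
        if not self.destination:
            problems.append("a data destination is required")
        if not self.tool:
            problems.append("a pipeline tool is required")
        names = [source.name for source in self.sources]
        if len(names) != len(set(names)):
            problems.append("source names must be unique")
        return problems


spec = PipelineSpec(
    sources=(Source("crm_db", "database"), Source("ad_spend", "api")),
    destination="warehouse",
    tool="etl_service",
)
problems = spec.validate()

incomplete = PipelineSpec(sources=(), destination="", tool="etl_service")
incomplete_problems = incomplete.validate()
```

Returning a list of problems, rather than raising on the first one, lets a reviewer see every gap in the plan at once.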

Data Pipeline Best Practices

When building a data pipeline, it’s essential to follow best practices to ensure it is efficient, scalable, and secure. Some of the best practices for building a data pipeline include the following:

Plan and design the pipeline before implementation

Before building a data pipeline, it’s crucial to take the time to plan and design the pipeline. This includes determining the sources of data, the data destination, and the tools that will be used. This will help ensure that the pipeline is built with the right components and meets the organization’s needs.

Automate as much as possible

Data pipelines should be designed to automate the data transfer process as much as possible. This will help to lower the risk of human error, improve efficiency, and ensure that the pipeline is scalable.
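An automated pipeline has to cope with transient failures without someone stepping in. A minimal sketch of one common approach, retrying a step with exponential backoff, is shown below. The `run_with_retries` helper and the flaky extract step are hypothetical, and the `sleep` parameter exists only so the example can run without actually waiting.

```python
import time


def run_with_retries(step, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call `step()` until it succeeds, waiting base_delay * 2**n between tries."""
    for attempt in range(attempts):
        try:
            return step()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** attempt)


# Hypothetical step that fails twice before succeeding.
calls = {"count": 0}


def flaky_extract():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return ["row-1", "row-2"]


delays = []  # records the requested waits instead of sleeping
rows = run_with_retries(flaky_extract, sleep=delays.append)
```

In a real deployment the default `time.sleep` would be used, and a scheduler or orchestration tool would typically trigger the run.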

Use the right tools for the job

Selecting the right tools for the job is critical to building a successful data pipeline. It’s important to choose tools that can handle the volume and complexity of data that the pipeline will need to process.

Regularly monitor and maintain the pipeline

Data pipelines require regular maintenance and monitoring to ensure they function properly. This includes monitoring data quality, checking for errors, and making necessary updates and improvements.
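As a rough sketch of what a data quality check might look like, the function below reports required fields with too many missing values. The `check_quality` name, the 10% threshold, and the sample rows are assumptions chosen for illustration.

```python
def check_quality(rows, required_fields, max_null_ratio=0.1):
    """Return a list of issues found in `rows`; an empty list means the batch passed."""
    if not rows:
        return ["batch is empty"]
    issues = []
    for field_name in required_fields:
        missing = sum(1 for row in rows if row.get(field_name) in (None, ""))
        if missing / len(rows) > max_null_ratio:
            issues.append(f"{field_name}: {missing}/{len(rows)} values missing")
    return issues


# Hypothetical batch in which half of the email values are missing.
batch = [
    {"email": "a@example.com", "amount": 10.0},
    {"email": "", "amount": 5.0},
    {"email": None, "amount": 7.5},
    {"email": "d@example.com", "amount": 1.0},
]
issues = check_quality(batch, required_fields=["email", "amount"])
```

A check like this can run after every load, with a non-empty result raising an alert or blocking downstream jobs.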

Encrypt sensitive data

Data pipelines should be designed to encrypt sensitive data both in transit and at rest. This includes using secure protocols, such as TLS (the successor to the now-deprecated SSL), for data in transit, and using properly managed encryption keys for data at rest.
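For data in transit, a minimal sketch using Python’s standard `ssl` module is shown below. It builds a client-side context that verifies certificates and refuses protocol versions older than TLS 1.2; the `make_tls_context` name and the TLS 1.2 floor are assumptions for this example. Encryption at rest is not shown, since it is usually handled by the storage layer or a vetted cryptography library rather than the standard library.

```python
import ssl


def make_tls_context():
    """Build a client-side TLS context that verifies certificates and hostnames."""
    context = ssl.create_default_context()
    # Refuse legacy protocol versions; SSLv3 and early TLS are considered insecure.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


tls_context = make_tls_context()
```

A context like this can be passed to standard-library clients that accept one, for example through the `context` argument of `urllib.request.urlopen`.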

Final thoughts

In conclusion, a data pipeline is crucial in today’s data-driven world. It streamlines the data transfer process and transforms, cleans, and organizes the data for better analysis and insights. With the right tools, expertise, and well-designed architecture, organizations can leverage the full potential of their data and gain a competitive edge in their respective industries.

At Reenbit, our team of expert cloud engineers offers comprehensive services to help organizations build and maintain their data pipelines. With years of experience in cloud platform integration, real-time data processing, and data management, we can help you to design and implement a data pipeline that meets your specific needs and requirements.

If you’re ready to take your data management to the next level, request a quote today and see how we can help you to transform your data into actionable insights. Our team is dedicated to delivering outstanding results and providing unparalleled support, so you can focus on driving growth and success for your organization.